Most risk isn’t visible in what applicants submit. It’s visible in how they behave while they’re submitting it. ForMotiv turns in-session behavioral data into real-time conversion and risk decisions—so you can grow more confidently without compromising your book.

Growth demands less friction. Fraud prevention demands more.

The same improvements that lift digital conversion also open the door to misrepresentation and fraud. Fewer questions, less friction, faster bind: better for honest applicants, and easier for the ones who aren’t.

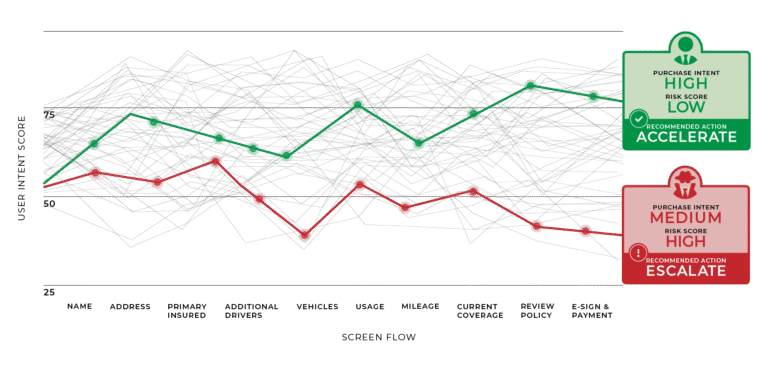

ForMotiv captures what the optimized application can’t: proprietary digital body language data on every session — hesitation, corrections, edit patterns, timing — turned into real-time risk and conversion decisions during the underwriting flow on both direct and agent channels.

Trusted by a majority of the top 10 U.S. carriers to improve decisions from quote to bind to claims.

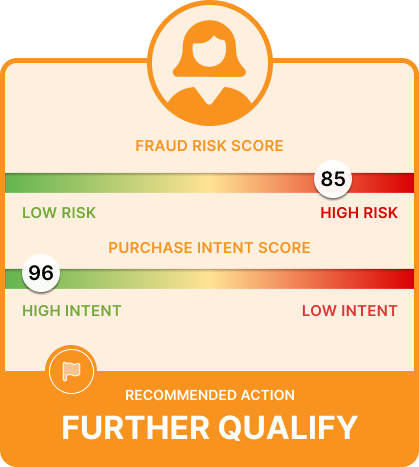

By capturing proprietary behavioral, device, and network data, ForMotiv delivers real-time Behavioral Intelligence that lets carriers dynamically tailor digital journeys to each user’s purchase intent and risk profile. The result is better conversion and user experience alongside lower underwriting risk and fraud.

Improve Conversion and Monetization with Real-Time Intent Scoring

Prevent Leakage & Underwriting Risk Before Policy Issuance

Detect and Stop Bots, Ghost Brokers, Fraud Rings, and Agentic AI in Real Time

Access Hundreds of Proprietary Behavioral Features via Real-Time API

Pinpoint Exactly Where Applicants and Agents Encounter Friction

Benchmark Agent & Agency Behavior, Detect Gaming, and Improve Agent Channels

Is your applicant high-intent because they bought a car this morning — or because they got in an accident this morning?

ForMotiv is the only solution that tells you the difference, in real time, before you bind the policy. Two applications with the same submitted answers can carry very different risks depending on how they were completed. The first applicant fills out the application diligently and honestly. The second adds a driver, sees a quote, removes the driver but not a vehicle, edits the garaging address, and lowers the stated mileage. Same answers. Different story. Different impact on your loss ratio.

ForMotiv doesn’t add friction. It applies intelligence — directing the right action (approve, flag, verify, intervene, decline) to the right applicant or agent session, at the moment the outcome can still be influenced.

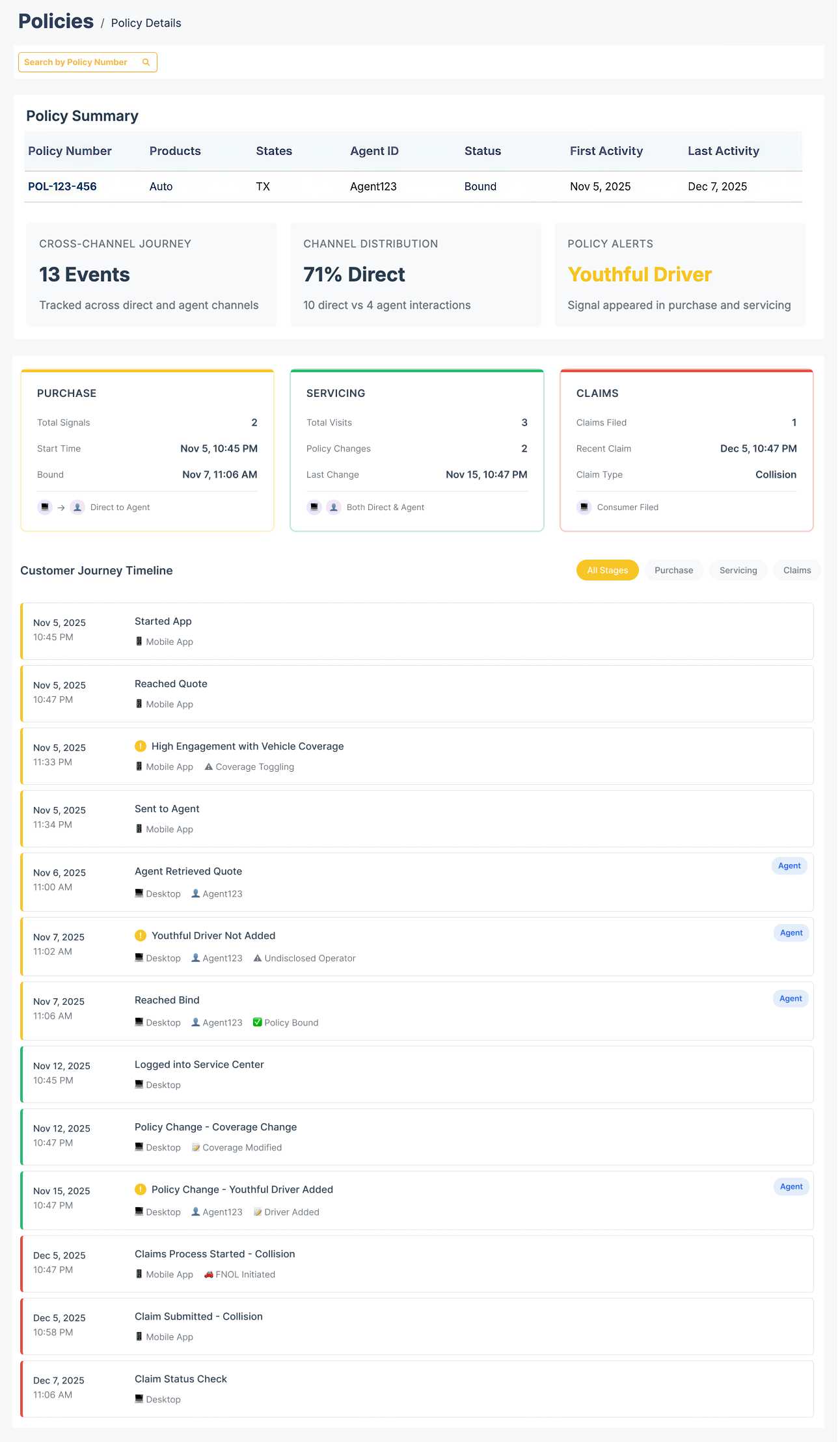

One continuous behavioral thread. ForMotiv stitches D2C, Agent, Call Center, Servicing, and Claims into a single, unified customer view.

Cross-channel session linking. An agent or call center rep can see exactly what a customer did (where they got stuck, any coverage toggling, associated risk signals, and more) before saying hello.

Behavioral memory, quote to claim. Longitudinal profiles resurface pre-bind risk signals during policy administration, renewal, and claims, closing the loop on every policy.

A lightweight JavaScript tag. No performance impact. IT teams go live in weeks, not quarters.

Every signal, model, and behavioral feature is API-accessible in real time. No black box. Full carrier control over how outputs are used.

Proprietary, predictive dataset captured directly from your applicants and agents.

Behavioral features refined against actual policy outcomes — conversions, claims, fraud, and more — across hundreds of millions of sessions.

GDPR, CCPA, and PIPEDA compliant. No personal information captured or stored.